Collaborators Create Cyberbullying Early-Response Tool

Researchers Focus Efforts on Instagram

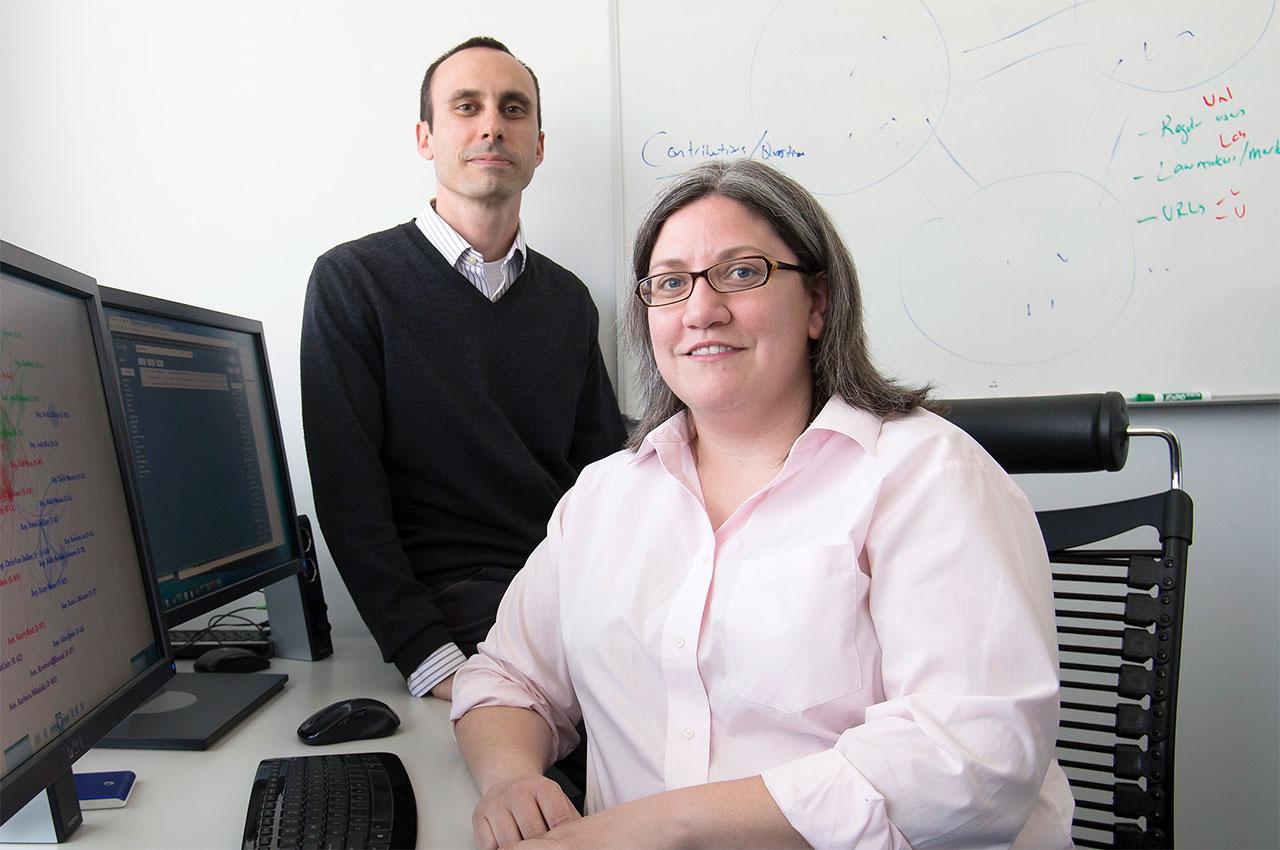

One in three teens in the United States experiences cyberbullying, which can lead to violence, depression, and substance abuse. Focusing on Instagram, Aron Culotta, director of the Text Analysis in the Public Interest Lab and assistant professor of computer science at Illinois Tech, and Libby Hemphill, director of the Resource Center for Minority Data and associate professor of information at the University of Michigan, have been working to predict cyberbullying and intervene before it escalates. The researchers and two of their students have created a tool that uses linguistic and social features from earlier comments to forecast the presence and intensity of hostility on Instagram. The tool can predict cyberbullying up to 10 hours before it occurs, giving schools, parents, law enforcement officials, and others time to intervene.

Culotta and Hemphill chose to focus on cyberbullying among teenagers. Interviewing stakeholders such as parents, school administrators, and police, Culotta and Hemphill learned that their top cyberbullying concerns included situations in which teens who know each other get into offline fights that escalate online and can result in physical altercations.

“I was drawn to this research topic both because of the technical challenge of designing algorithms to understand nuanced language patterns and also because of the societal importance of preventing online harassment,” says Culotta. “Unlike traditional bullying, cyberbullying can’t be avoided by walking away; our digital lives follow us home.”

The two created a natural language processing algorithm to comb through some 15 million Instagram comments from more than 400,000 Instagram posts. They also manually annotated 30,000 comments for the presence of cyberbullying to train and validate their forecasting models. Their best model performed well (AUC of 0.82) at predicting cyberbullying based on earlier comments in a post. It relied on cues such as patterns of hostile conversation and certain key words and acronyms that often precede cyberbullying, for example, gender-specific hostile terms or insulting acronyms.
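For readers unfamiliar with the metric, AUC (area under the ROC curve) can be read as the probability that a model gives a higher risk score to a randomly chosen post that later turns hostile than to a randomly chosen post that does not. A score of 0.5 is chance and 1.0 is perfect ranking. The sketch below computes it by direct pairwise comparison. The function name `auc` and the labels and scores are made up for illustration; this is not the researchers' code or data.

```python
def auc(labels, scores):
    """Area under the ROC curve, computed by comparing every
    positive/negative pair of examples.

    labels: 1 if hostile comments followed, else 0 (hypothetical toy data).
    scores: a model's predicted risk of future hostility (hypothetical).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))


# Toy example: six posts, three of which later turned hostile.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2]
toy_auc = auc(labels, scores)
print(round(toy_auc, 2))  # 8 of 9 pairs ranked correctly -> 0.89
```

In this toy case the model misranks one pair (the hostile post scored 0.4 against the non-hostile post scored 0.6), so the AUC is 8/9.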

While most prior work on cyberbullying has focused on identifying hostile messages after they have been posted, Culotta and Hemphill’s work instead forecasts the presence and intensity of future hostile comments, making it more useful as an intervention tool. The two created a web interface for their tool and plan to invite parent groups to opt in to the application. This project was originally funded by the Nayar Prize at Illinois Tech.