Virtual Reality Brings Construction Sites to the Classroom

Illinois Institute of Technology Assistant Professor of Civil and Architectural Engineering Ivan Mutis has received $851,433 across two National Science Foundation awards for projects that explore the application of mixed reality to engineering education.

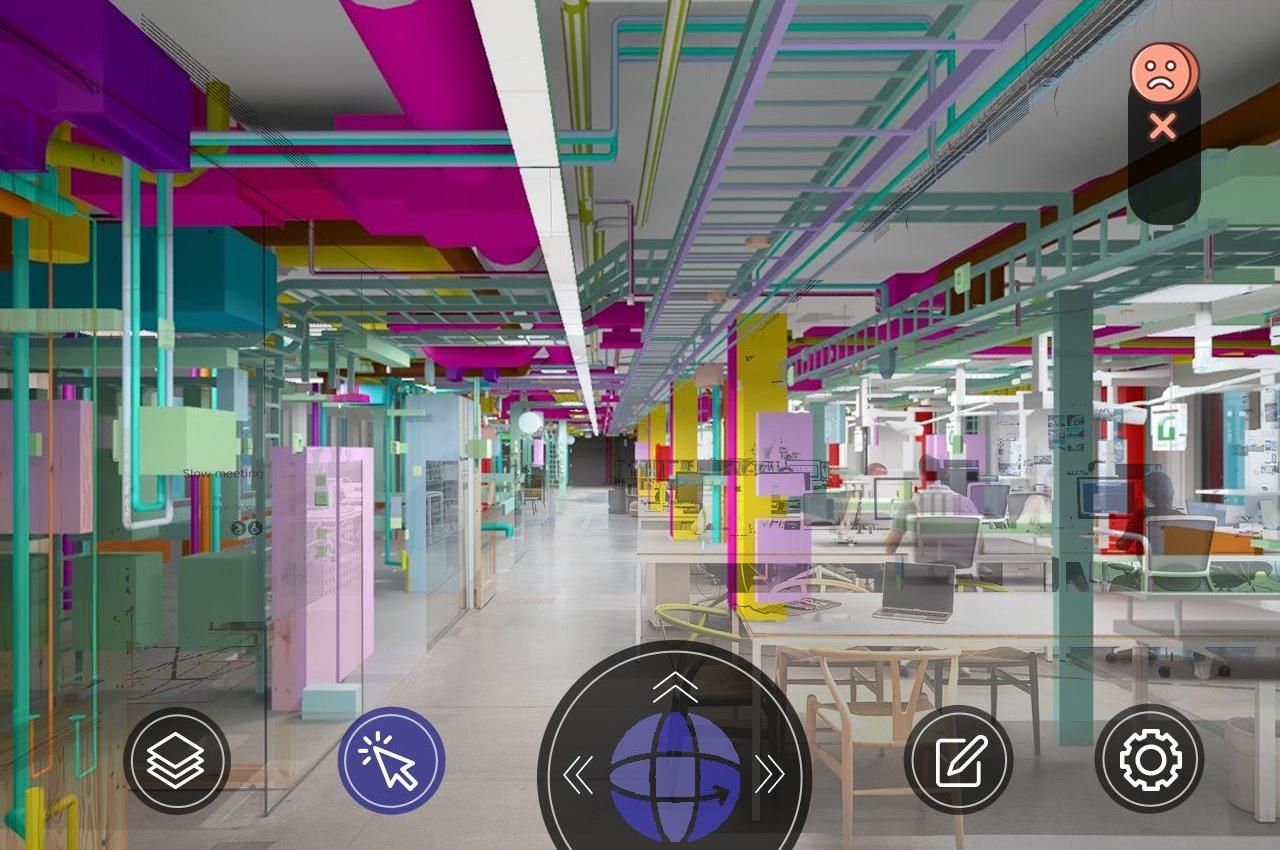

“Mixed reality overlays virtual content directly into the user’s view while also enabling the ability to manipulate [virtual] objects,” says Mutis.

Mutis says this type of immersive holographic space will be ubiquitous within the next decade.

“Big technology companies such as Apple and Meta are making huge investments in virtual reality, and they have faith that this is the future line of work, interacting, exchanging, and communicating,” he says.

Mutis, who also piloted the Department of Civil, Architectural, and Environmental Engineering’s Drones for Construction Projects course and is a director of the iConSenSe Laboratory, is creating a broad mixed reality platform that lets students virtually engage with the infrastructure of a real building, including structural elements such as support beams, electrical systems, water systems, and more.

His initial focus for the project is supporting students who are learning construction engineering.

Over the course of their degree program, many students will have the opportunity to visit construction sites through experiences such as internships. While highly valuable, these experiences cannot give students direct exposure to the full range of construction scenarios they should be familiar with.

Classroom activities typically try to bridge that gap by having students work with computer simulations or building information modeling (BIM), but Mutis says there are better options for learning design interpretation.

“We want to immerse learners in a simulated physical world that enables them to experience the sense of relative proportions of the elements that you observe in designs,” says Mutis. “The immersive environment technology facilitates awareness of the component’s functionality within an engineering system itself.”

For example, within the mixed reality environment, a student may encounter a building's support beam. On a real site, the student could only view the outside of the beam from ground level. In the virtual environment, they can explore it from all angles and slide it out of place to see, at scale, how it connects to nearby components.

“This provides a form of exploration to enlighten the learner about design components, interactions, and relationships, and the dependencies or possible conflicts of physical space,” says Mutis.

Through a second NSF award, Mutis, Associate Professor of Computer Science Gady Agam, and Assistant Professor of Psychology Kristina Bauer are investigating how students learn in the mixed reality environment and are developing machine learning algorithms to provide individualized learning experiences tuned to a student’s needs.

“We model to achieve that ‘Aha!’ eureka moment, where you say, ‘I know what this means.’ But that moment is much different from one person to the other, and that’s something that is not recognized within the general context in engineering education,” says Mutis.

Mutis says he expects the results to be broadly beneficial to the study of how people learn.

“Research on learning with technologies involving the influence of individual cognitive function is relatively unexplored,” says Mutis. “There remains a gap in knowledge on how or why learners arrive at different results in the learning process.”

By adding an artificial intelligence layer to the mixed reality experience, the software can adapt a student’s experience in order to guide them toward the moment of understanding.

For example, the program may provide more visual cues or prompts if it determines that doing so will help guide a student toward that "Aha!" moment.

Mutis says he hopes that demonstrating how virtual and mixed reality can be adapted to the needs of a user will provide a foundation for other developers to do the same as the field grows.

“This lays the foundation for developers at companies to say, ‘We do really need to incorporate individual differences in the users, and to develop the artificial intelligence (AI) models, to better develop these platforms,’” says Mutis. “We see the importance of expanding the universe of possibilities that this technology will provide in the future, not only for education but also for other ways of working and interacting.”

This project is in the first stage of development at the Trimble Technology Laboratory, of which Mutis is director.

Photo: Rendering demonstrating the use of mixed reality in the classroom environment (provided)